Sovereign Inference Is Not Sovereign Memory

Mistral Medium 3.5 runs on 4 GPUs. That solves inference — not where your knowledge lives, who controls it, or how to prove it under the EU AI Act.

Mistral just put a frontier model on 4 GPUs. That solves inference. It doesn't solve where your data lives, who controls it, or whether you can prove it.

A self-hosted model without self-hosted memory is security theater. On April 29, Mistral released Medium 3.5: 128 billion parameters, a 256,000-token context window, open weights under a modified MIT license, deployable on four H100 GPUs. The internet shrugged. It is neither the cheapest model nor the highest-scoring one. But for European enterprises under the EU AI Act, the question was never which model is best. It was whether any frontier model exists that fits their compliance posture. Mistral just answered that. The harder question remains unanswered: what about everything else in the stack?

What four GPUs solve and what they do not

The specs are real. Mistral Medium 3.5 scores 77.6% on SWE-Bench Verified and 91.4% on the Tau-3 Telecom agentic benchmark. It consolidates three predecessor models (Medium 3.1, Magistral, and Devstral 2) into a single set of weights with configurable reasoning effort. At FP8 precision, four H100 80GB GPUs carry it in production. At 4-bit quantization, a Mac Studio with 128 GB unified memory runs it on a desk for $3,500.
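The hardware claims follow from simple arithmetic. Here is a rough sketch of it, counting only the memory for the weights and assuming one byte per parameter at FP8 and half a byte at 4-bit. It ignores the KV cache, activations, and runtime overhead, which is what the leftover headroom has to cover.

```python
# Back-of-the-envelope estimate of weight memory only.
# Assumption: KV cache, activations, and runtime overhead are NOT counted here.
PARAMS = 128e9  # 128 billion parameters, from the release specs quoted above


def weight_gb(params: float, bits_per_param: int) -> float:
    """Memory needed for the weights alone, in decimal gigabytes."""
    return params * bits_per_param / 8 / 1e9


fp8_gb = weight_gb(PARAMS, 8)   # one byte per parameter
int4_gb = weight_gb(PARAMS, 4)  # half a byte per parameter

h100_total_gb = 4 * 80  # four H100 80GB cards
mac_total_gb = 128      # Mac Studio unified memory

print(f"FP8 weights:   {fp8_gb:.0f} GB of {h100_total_gb} GB, "
      f"{h100_total_gb - fp8_gb:.0f} GB headroom")
print(f"4-bit weights: {int4_gb:.0f} GB of {mac_total_gb} GB, "
      f"{mac_total_gb - int4_gb:.0f} GB headroom")
```

The headroom matters: a 256,000-token context window needs a large KV cache, so the usable context on either machine depends on how much memory is left after the weights are loaded.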

This is a genuine shift. Twelve months ago, self-hosting a frontier-class model meant a cluster. Now it means a single server. For the cost of a mid-range car, a regulated European company can run inference entirely on its own hardware, under its own jurisdiction, without a single API call leaving the building.

The AI market noticed and did not care. Decrypt reported the release with the headline “the internet is not impressed.” Hacker News threads were short. The benchmark crowd was right to be unimpressed. Alibaba’s Qwen 3.6, Zhipu’s GLM, and DeepSeek V4 dominate the open-weight leaderboards at lower cost. Pedro Domingos put it plainly: most AI companies brag about how much better their models are; Chinese open-source labs brag about how much cheaper they are; Mistral is doing neither.

But the benchmark crowd is answering the wrong question. HSBC did not sign a multi-year deal with Mistral because of leaderboard position. The French military did not deploy Mistral on an air-gapped supercomputer inside a nineteenth-century fortress because of price-performance ratios. They did it because for their risk profile, no other option existed.

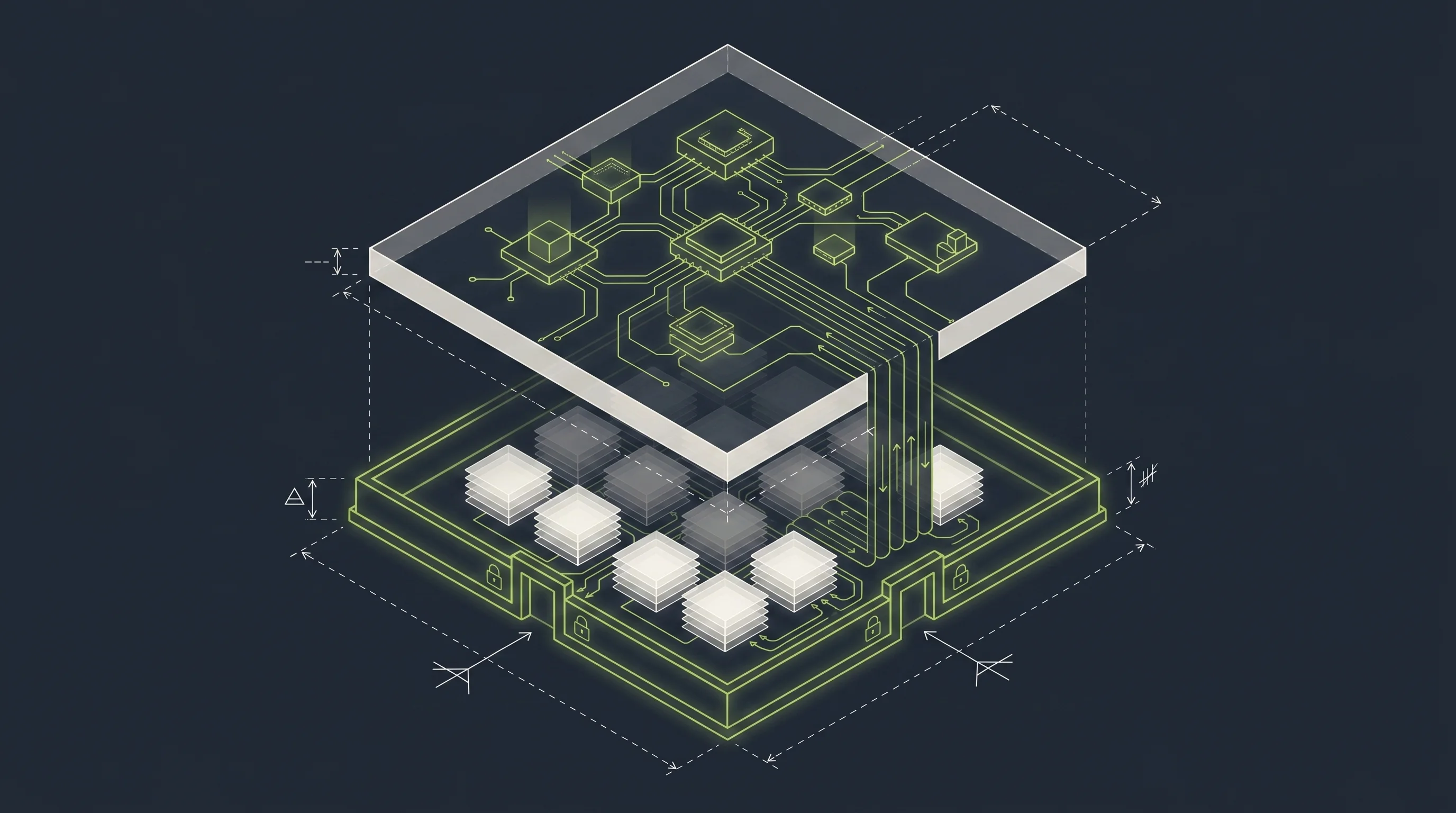

Inference is one layer. In a production AI system, one that answers questions about contracts, compliance memos, customer records, and institutional knowledge, the model touches data that flows through retrieval pipelines, embedding stores, knowledge graphs, document processors, and audit logs. That entire surface is the actual perimeter. Self-hosting the model and routing your documents through Pinecone, Weaviate Cloud, or any vector database outside your jurisdiction is sovereignty at the front door and a data leak at the back.
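One way to make that perimeter concrete is to inventory every component that touches the data and check each one against the jurisdiction you are willing to accept. The sketch below is illustrative only: the component names, operators, and the single-jurisdiction policy are hypothetical, not a description of any real deployment.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Component:
    name: str
    layer: str         # "inference" or "memory"
    operator: str      # who runs the component
    jurisdiction: str  # whose law the operator answers to


# Assumption: the organization's policy accepts EU jurisdiction only.
ALLOWED_JURISDICTIONS = {"EU"}


def outside_perimeter(stack: list[Component]) -> list[Component]:
    """Return every component governed by a jurisdiction the policy does not allow."""
    return [c for c in stack if c.jurisdiction not in ALLOWED_JURISDICTIONS]


# Hypothetical stack: sovereign model, non-sovereign memory layer.
stack = [
    Component("llm", "inference", "self-hosted", "EU"),
    Component("embedding-service", "memory", "hosted-vendor", "US"),
    Component("vector-store", "memory", "managed-cloud", "US"),
    Component("audit-log", "memory", "self-hosted", "EU"),
]

leaks = outside_perimeter(stack)
for c in leaks:
    print(f"outside perimeter: {c.name} ({c.layer}, {c.operator}, {c.jurisdiction})")
```

In this example the model passes and two memory components fail, which is the front-door, back-door pattern described above.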

Three levels of sovereignty theater

The EU AI Act becomes generally applicable on August 2, 2026. Eighty-nine days from today. Articles 8 through 15 define high-risk system requirements. Deployers must implement human oversight, monitor performance, maintain records, report incidents. Fines reach up to EUR 35 million or 7% of worldwide annual turnover for the most serious violations. The regulation does not ask which model you use. It asks whether you can prove, at any point in time, what data entered the system, which model processed it, and what controls governed the flow.
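What would such proof look like in practice? A minimal sketch, assuming a per-request audit record that stores a hash of the input, the model identifier, the controls in force, and a UTC timestamp, with each record chained to the previous one so that later tampering is detectable. The field names and the hash-chain design are illustrative assumptions; the regulation does not prescribe a format.

```python
import hashlib
import json
from datetime import datetime, timezone

GENESIS = "0" * 64  # placeholder "previous hash" for the first record


def sha256_hex(data: bytes) -> str:
    return hashlib.sha256(data).hexdigest()


def append_record(log: list, document: bytes, model: str, controls: list) -> dict:
    """Append one audit record, linked to the previous record by its hash."""
    record = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "document_sha256": sha256_hex(document),  # what data entered the system
        "model": model,                           # which model processed it
        "controls": controls,                     # which controls governed the flow
        "prev_hash": log[-1]["record_hash"] if log else GENESIS,
    }
    # Hash the record body before the hash field itself is added.
    record["record_hash"] = sha256_hex(json.dumps(record, sort_keys=True).encode())
    log.append(record)
    return record


def verify(log: list) -> bool:
    """Recompute every hash and check that the chain is unbroken."""
    prev = GENESIS
    for rec in log:
        body = {k: v for k, v in rec.items() if k != "record_hash"}
        if rec["prev_hash"] != prev:
            return False
        if sha256_hex(json.dumps(body, sort_keys=True).encode()) != rec["record_hash"]:
            return False
        prev = rec["record_hash"]
    return True
```

A log built this way stores hashes rather than the documents themselves, so the audit trail can be handed to an auditor without handing over the underlying records.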

Most enterprises preparing for this deadline are stuck at one of three levels. Each looks like progress. None of them is complete.

Level 1: “We use Azure OpenAI in an EU region.” The model runs on Microsoft hardware in an EU data center. The provider is a US company under the CLOUD Act. US authorities can compel data disclosure regardless of server location. You cannot inspect the model weights. You cannot audit the system end-to-end. You cannot prove to a regulator that the data flow stays within European jurisdiction, because the provider remains subject to US law. This is not sovereignty. It is geographic coincidence.

Level 2: “We self-host Mistral. Compliance solved.” The model runs on your GPUs. Good. But your enterprise documents flow through a hosted embedding service. Your vector store is a managed cloud database. Your retrieval pipeline calls an external API for reranking. The model is sovereign. The knowledge layer, the part that actually contains your sensitive data, is not. You control inference. You do not control memory. Guillaume Lample, Mistral’s co-founder, said it directly: “90% of companies cannot effectively use an open-source model checkpoint without significant help.” The help most of them reach for is a cloud-hosted RAG stack. That is where sovereignty breaks.

Level 3: “Everything is on-premise.” The model is local. The vector store is local. The documents stay in the building. But there is no engine abstraction. The system is locked to one model, one retrieval backend, one set of assumptions. When the risk profile changes (a new use case requires a different model, a new regulation requires a different data residency), the entire stack has to be rebuilt. There is no audit trail that records which data reached which model. There is no mechanism to route different workloads to different engines based on sensitivity. The infrastructure is sovereign. The architecture is not.

Memory is the actual perimeter

Here is the part that the current sovereignty discussion misses: the model is fungible. You can swap Mistral for Llama, for a fine-tuned variant, for whatever scores highest on your internal eval next quarter. The model is a commodity that processes tokens. It does not store your data. It does not know your business.

Your memory layer does. The knowledge graph that maps your organizational structure. The embedding store that indexes fifteen years of contracts. The retrieval pipeline that surfaces the right compliance memo when an agent needs it. The document processor that ingests board minutes. This is where your institutional knowledge lives. This is what the EU AI Act actually asks you to control.

Memory is harder to swap than a model. It accumulates over months and years. It represents the specific shape of your organization’s knowledge. When it sits outside your perimeter, a self-hosted inference engine is protecting an empty room. The valuables left through the back door at ingestion time.

HSBC understood this. Their press release specifies self-hosted models operating on HSBC’s internal technology systems: not just the model, but the screening, the audit trails, the compliance reviews, all kept close to core systems. The French military went further. Their ASGARD supercomputer in the Mont-Valérien fortress is completely air-gapped. No public internet connection. The model, the memory, the training data, the operational documents, everything inside one physical perimeter. That is sovereign AI. Not “we self-host the model and use a cloud RAG service.”

The question for CTOs and CISOs preparing for August is not whether to self-host Mistral. It is whether your memory layer, the part that holds what your business actually knows, answers to the same jurisdiction as your inference engine.

The architecture that makes this a choice, not a migration

The honest answer is that not every workload needs full sovereignty. An internal FAQ bot that answers questions about the cafeteria menu does not need air-gapped infrastructure. A system that processes credit applications under Annex III of the AI Act does. The same organization runs both. The architecture has to support both. The decision about which workload gets which deployment should be a configuration choice, not a rebuild.

This is what engine abstraction exists for. A platform where the inference engine is swappable (API-based for low-risk, self-hosted Mistral for high-risk) and where the memory layer stays sovereign regardless. Where audit trails record data flows per request. Where the decision “this workload runs on-premise” is a routing rule, not a six-month infrastructure project.
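To illustrate "a routing rule, not an infrastructure project", here is a minimal sketch in which each workload carries a risk classification and the classification alone decides which engine serves it. The engine names, workload names, and two-level risk scale are hypothetical; a real platform would load the table from configuration rather than hard-code it.

```python
from dataclasses import dataclass
from enum import Enum


class Risk(Enum):
    LOW = "low"
    HIGH = "high"


@dataclass(frozen=True)
class Engine:
    name: str
    on_premise: bool


# Hypothetical engines: an external API and a self-hosted model.
ENGINES = {
    "hosted-api": Engine("hosted-api", on_premise=False),
    "local-mistral": Engine("local-mistral", on_premise=True),
}

# Policy: which engine serves which risk class.
ENGINE_FOR_RISK = {Risk.LOW: "hosted-api", Risk.HIGH: "local-mistral"}

# Configuration: the risk classification of each workload.
WORKLOAD_RISK = {
    "cafeteria-faq": Risk.LOW,
    "credit-scoring": Risk.HIGH,
}


def route(workload: str) -> Engine:
    """Pick the engine for a workload; unknown workloads fail closed to HIGH."""
    risk = WORKLOAD_RISK.get(workload, Risk.HIGH)
    return ENGINES[ENGINE_FOR_RISK[risk]]


print(route("cafeteria-faq").name)   # low-risk workload goes to the hosted API
print(route("credit-scoring").name)  # high-risk workload stays on-premise
```

Reclassifying a workload is then a one-line change to `WORKLOAD_RISK`. Defaulting unknown workloads to the high-risk path is a deliberate choice in this sketch: a missing entry should never send data outside the perimeter.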

Enchilada is built on this principle. Multi-engine RAG with a narrow proxy interface. Workspaces that isolate tenants and workloads. Audit logs that answer the question a regulator will ask: what data entered this system, which model processed it, under which controls. The memory layer (document ingestion, embedding, retrieval, knowledge representation) stays under the deployer’s control. EU-hosted or on-premise. No data leaves the perimeter unless the configuration explicitly routes it elsewhere.

This is not about replacing Mistral or competing with it. Mistral solved the inference problem: a frontier model that runs on four GPUs, open weights, European origin. The memory problem (where your enterprise knowledge lives, who can access it, whether you can prove the data flow to a regulator) is a different layer. Solving one without solving the other is half a stack. And the EU AI Act does not grade on a curve.

The board question

The three questions that belong on your AI governance agenda this week:

First: where does your memory layer run? Not your model. Your documents, your embeddings, your knowledge graph. Which jurisdiction governs it? Which provider operates it? Can you switch providers without losing the knowledge that has accumulated?

Second: can you prove the data flow? If a regulator asks today which customer records entered your AI system and which model processed them, can you answer? Not in theory. In production. With timestamps.

Third: do you have the option? Not “is everything on-premise.” That is the wrong question for most workloads. The real question: if a use case moves from low-risk to high-risk classification tomorrow, can your architecture absorb that change as a configuration switch? Or does it require a new stack?

If the answers are unclear, the self-hosted model is protecting an empty room. The work is in the memory layer. That is where the data lives. That is where sovereignty either holds or breaks.

Sources

- Mistral Medium 3.5 Launched: What It Means for Self Hosted AI Infrastructure (blog)
- High-level summary of the AI Act (docs)
- HSBC and Mistral AI join forces to accelerate AI adoption across global bank (blog)
- France Deploys Mistral AI Across Military to Accelerate Operational Decision-Making (blog)
- Building and Deploying Enterprise-Grade LLMs: Lessons from Mistral (blog)
- Self-Host Mistral Medium 3.5: vLLM, SGLang & GPU Guide (blog)
- EU AI Act 2026: Key Compliance Requirements for Enterprises (blog)